This blog is the third part of the series “Data Migration Simplified.” Learn more about the entire blog series here.

Migrating to the cloud has become essential for thriving in an increasingly digital and remote world. Many businesses have undertaken cloud migration as part of their digital transformation initiatives. While advances in tools and technologies have made migrating to the cloud much easier, making optimal use of the migrated data is a critical capability that allows businesses to remain competitive. Ensuring that the data has been correctly migrated to the cloud platform is the final litmus test for every project.

Data accuracy and consistency are imperative when loading data from the source to the destination system, but the challenge arises when billions of data points across thousands of tables must be validated within strict timelines. In the previous two blogs of this series, we saw the importance of planning and code conversion during migration. In this blog, let's take a closer look at why validating the migrated data matters and how it contributes to the success of your migration project.

Automated data validation

With migration projects, there is always a possibility of data loss or corruption. It's critical to validate that the entire data set has been migrated, for both historical and incremental data migration. Incremental loading is much more complicated because each database has its own structure. This requires validating that all the jobs and fields are loaded correctly and that the files are not corrupted.
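A first, coarse completeness check is to compare each table's row count and an order-independent checksum on both sides. The sketch below is a generic illustration, not Pelican's method: `row_digest` and `table_fingerprint` are hypothetical names, and it assumes both systems render every value identically (in practice, types such as decimals and timestamps need a shared canonical format first). Summing 64-bit row hashes makes the checksum independent of row order while still catching missing or duplicated rows.

```python
import hashlib
from typing import Iterable, Sequence

Row = Sequence[object]

def row_digest(row: Row) -> int:
    """Hash one row into a 64-bit integer.

    Assumption: values are canonicalized with repr() and joined by a
    unit-separator character; a real migration needs a format both
    the source and target systems can produce identically.
    """
    text = "\x1f".join(repr(v) for v in row)
    return int.from_bytes(hashlib.sha256(text.encode("utf-8")).digest()[:8], "big")

def table_fingerprint(rows: Iterable[Row]) -> tuple[int, int]:
    """Return (row_count, checksum); the checksum ignores row order."""
    count = 0
    checksum = 0
    for row in rows:
        count += 1
        # Addition modulo 2**64 is order-independent but, unlike XOR,
        # does not cancel out duplicated rows.
        checksum = (checksum + row_digest(row)) % (1 << 64)
    return count, checksum
```

If the fingerprints of a source table and its migrated copy differ, the table needs a finer-grained, row- or cell-level comparison.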

Manually validating and reconciling data across two heterogeneous data stores often opens the door to errors. Common challenges include:

- The lack of cell-level validation

- Risk of losing data during validation

- The need to utilize highly specialized resources

- Lack of confidence to fully decommission legacy solutions

- Manual and sample validation rarely achieve 100% accuracy

- Validating high volumes of data is often expensive and time-consuming

Through our years of experience helping businesses migrate to the Cloud, we have developed a state-of-the-art automated migration product suite that enables businesses to realize efficient data warehouse modernization and migration. Datametica's Pelican, the validation tool, allows you to validate any amount of data whenever you want, any number of times.

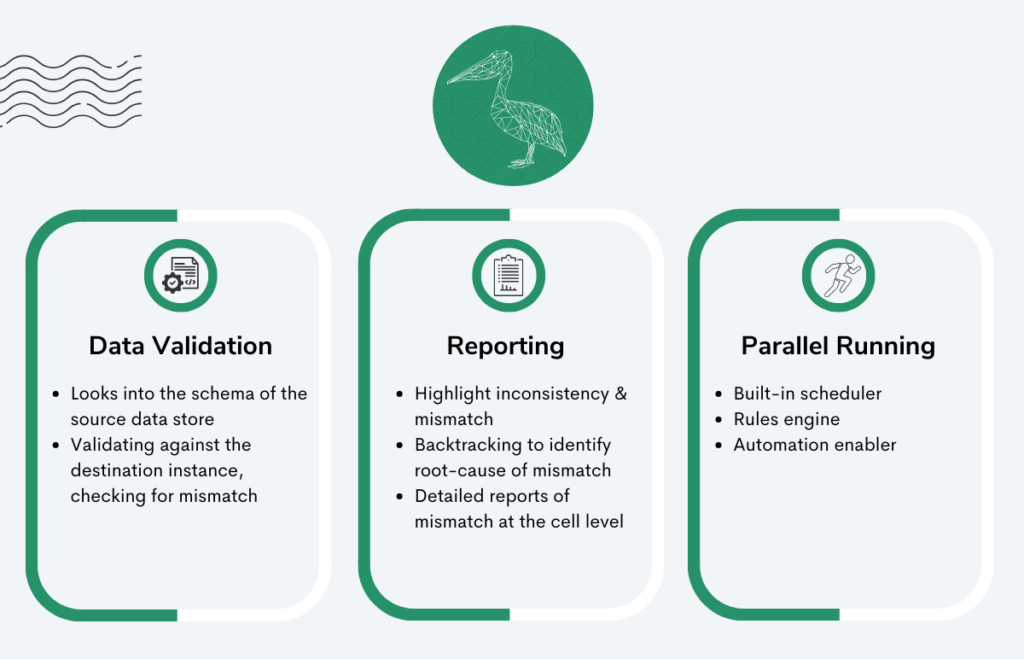

Validating data the right way with Pelican

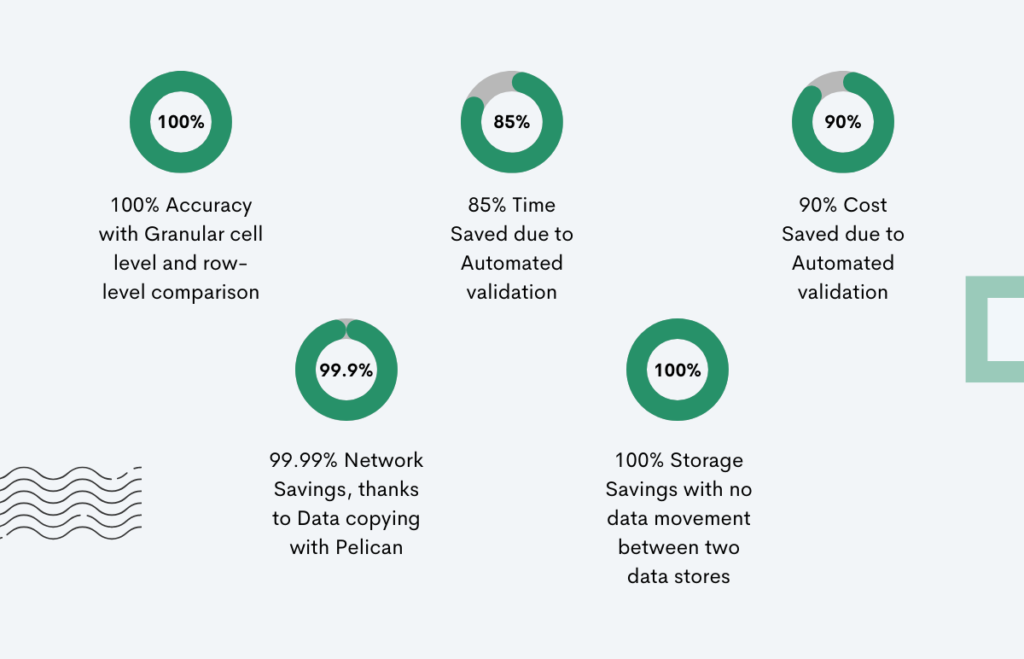

Pelican performs data validation and data migration testing by comparing and reconciling petabyte-scale data at the cell level across heterogeneous source and target systems, with zero data movement. Its cell-level validation reduces the business risks associated with migration and gives businesses the confidence to decommission their legacy systems. Replacing manual testing with automation yields greater cost and time savings during testing. The validation tool does not require writing manual queries, is easy to use, and is driven by UI-based configuration.
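To make "cell-level validation" concrete, here is a minimal sketch of what such a comparison reports, assuming both tables are already available as dictionaries keyed by primary key. This is an illustration of the idea only, not how Pelican is implemented (Pelican compares data in place, without moving it); `Mismatch` and `compare_cells` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Any, Mapping

@dataclass(frozen=True)
class Mismatch:
    key: Any      # primary-key value of the row
    column: str   # column name, or "<row>" when the whole row is missing
    source: Any   # value (or row) in the source system
    target: Any   # value (or row) in the target system

def compare_cells(
    source: Mapping[Any, Mapping[str, Any]],
    target: Mapping[Any, Mapping[str, Any]],
) -> list[Mismatch]:
    """Compare two keyed tables cell by cell and list every difference."""
    mismatches: list[Mismatch] = []
    # Sort by repr so the report order is deterministic for mixed key types.
    for key in sorted(source.keys() | target.keys(), key=repr):
        src_row, tgt_row = source.get(key), target.get(key)
        if src_row is None or tgt_row is None:
            # The row exists on only one side.
            mismatches.append(Mismatch(key, "<row>", src_row, tgt_row))
            continue
        for column in sorted(src_row.keys() | tgt_row.keys()):
            src_val, tgt_val = src_row.get(column), tgt_row.get(column)
            if src_val != tgt_val:
                mismatches.append(Mismatch(key, column, src_val, tgt_val))
    return mismatches
```

For example, if row 1 has `amount` 10 in the source and 11 in the target, the report contains `Mismatch(1, "amount", 10, 11)`, identifying the exact table row and column rather than just "table differs".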

———————

See how Pelican replaced 25 BigQuery engineers and saved 90% of the cost for the data validation step in the migration journey of a top U.S. retailer.

———————

Why Pelican is the better choice

Pelican can compare datasets across disparate systems without transferring data across the network. As a result, it requires neither extra storage to keep a copy of the data nor large network bandwidth for data transfer. It provides excellent data security, lowers the risk of data loss, and ensures 99.99% network savings. It compares source and target datasets at the cell level and pinpoints exactly where mismatches occur at the table and row levels. Pelican guarantees 100% accuracy in data quality testing!
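One general technique for comparing datasets without shipping the rows themselves is to compute a hash of each row next to the data and exchange only `(primary key, hash)` pairs. The sketch below simulates both sides in one process and is not a description of Pelican's internals; `row_hash`, `summarize`, and `diff_summaries` are hypothetical names, and it assumes both systems render values identically before hashing.

```python
import hashlib
from typing import Any, Iterable, Mapping, Sequence

def row_hash(values: Sequence[Any]) -> str:
    """Hex SHA-256 of one row; assumes a shared canonical value format."""
    return hashlib.sha256("\x1f".join(map(repr, values)).encode("utf-8")).hexdigest()

def summarize(rows: Iterable[tuple[Any, Sequence[Any]]]) -> dict[Any, str]:
    """Runs next to the data on each system: returns only key -> row hash."""
    return {key: row_hash(values) for key, values in rows}

def diff_summaries(src: Mapping[Any, str], tgt: Mapping[Any, str]) -> dict[str, set]:
    """Classify keys using the hashes alone; no row values are needed here."""
    return {
        "missing_in_target": set(src.keys() - tgt.keys()),
        "extra_in_target": set(tgt.keys() - src.keys()),
        "changed": {k for k in src.keys() & tgt.keys() if src[k] != tgt[k]},
    }
```

Only fixed-size hashes cross the network, so traffic grows with the number of rows rather than their width; the actual values need to be inspected only for the keys reported as changed.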

Pelican provides a rule engine on top of the validation layer and also allows businesses to write custom expressions at the column level. This helps prevent unintended changes in data format and makes it easier to identify data that is similar but not identical.
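Column-level rules of this kind can be pictured as normalization functions applied before two values are compared, so that a value differing only in format is reported as "similar" rather than as a hard mismatch. The rule set below is purely illustrative: the column names `name` and `amount`, the `RULES` mapping, and `classify_cell` are hypothetical and do not reflect Pelican's expression syntax.

```python
from typing import Any, Callable, Mapping

# Hypothetical rule set: column name -> function that normalizes a value
# before comparison. Illustrative only, not Pelican's rule syntax.
Rule = Callable[[Any], Any]

RULES: Mapping[str, Rule] = {
    # Ignore surrounding whitespace and letter case in names.
    "name": lambda v: v.strip().casefold() if isinstance(v, str) else v,
    # Compare amounts as numbers rounded to two decimal places.
    "amount": lambda v: round(float(v), 2) if v is not None else None,
}

def classify_cell(column: str, source: Any, target: Any) -> str:
    """Return 'match', 'similar' (equal only after the column rule), or 'mismatch'."""
    if source == target:
        return "match"
    rule = RULES.get(column)
    if rule is not None and rule(source) == rule(target):
        return "similar"
    return "mismatch"
```

With these rules, `"Alice "` versus `"alice"` in `name`, or the string `"10.50"` versus the number `10.5` in `amount`, is classified as similar, while `10.5` versus `10.6` remains a mismatch. Keeping "similar" separate from "match" preserves visibility into format changes instead of silently hiding them.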

Additionally, Pelican’s capabilities include:

- providing a detailed report of mismatches,

- visualizing data reconciliation outcomes,

- efficiently validating incremental data,

- faster execution,

- lower load on the source and target systems,

- filtering the data,

- pruning columns, and

- accurately traversing the pipeline lineage and identifying the source of data error.

… and a lot more

- Intelligent orchestration with a built-in or enterprise scheduler

- An interface for using its functionality programmatically

- Metadata validation capabilities

- Built-in IAM and work segmentation capabilities

- Detailed and trend reporting with dashboard capabilities

- Compatibility with any legacy EDW (Enterprise Data Warehouse) and Cloud platforms

- Implementation of best practices like penetration testing, data encryption, Active Directory integration, SSL compatibility, high availability, code migration, etc.

- Deployment options with modern technologies like Kubernetes
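Three of the capabilities listed above, efficiently validating incremental data, filtering the data, and pruning columns, share a simple idea: compare only the rows and columns that matter for a given run. The sketch below assumes a hypothetical watermark column (such as `updated_at`) that records when each row last changed; the function name and parameters are illustrative and not part of Pelican.

```python
from datetime import datetime
from typing import Any, Iterable, Iterator, Mapping, Sequence

def incremental_slice(
    rows: Iterable[Mapping[str, Any]],
    watermark_column: str,
    since: datetime,
    columns: Sequence[str],
) -> Iterator[tuple[Any, ...]]:
    """Yield only rows changed after `since`, keeping only `columns`.

    Running the same slice on the source and the target keeps each
    validation run small: older rows are filtered out and wide columns
    that are not under test are pruned before any comparison.
    """
    for row in rows:
        if row[watermark_column] > since:        # filter: incremental window
            yield tuple(row[c] for c in columns)  # prune: selected columns only
```

In a real system this filtering and pruning would typically be pushed down into each database's query, so the reduced load applies to both the source and the target rather than to the validator alone.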

Conclusion

A migration project can be considered successful only when the migrated data has been validated for loss or corruption and is usable by people in the destination system. Data validation is critical for data and analytics workflows because it ensures that only high-quality data is collected and processed. It not only produces dependable, consistent, and accurate data but also streamlines processes. Many organizations are turning to automation to achieve easy-to-implement, requirement-compliant validation tests.

Datametica is a global leader in data warehouse modernization and migration and has helped many businesses successfully validate their migrated data. Initiate a conversation with us today to learn more about our migration automation tools.

Niraj Kumar


About Datametica

A Global Leader in Data Warehouse Modernization & Migration. We empower businesses by migrating their Data/Workload/ETL/Analytics to the Cloud by leveraging Automation.
